2FA (Two-Factor Authentication): Privacy Concerns and Unethical Practices

Two-factor authentication (2FA) has gained widespread recognition as a vital tool for enhancing online security. While its primary goal is to protect user accounts from unauthorized access, there is a darker side to 2FA that raises privacy concerns and opens the door to unethical practices by developers and companies. This essay explores the many scenarios in which 2FA can be used to compromise the privacy of end-users.

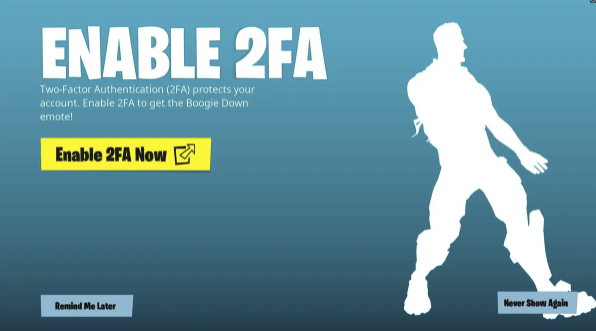

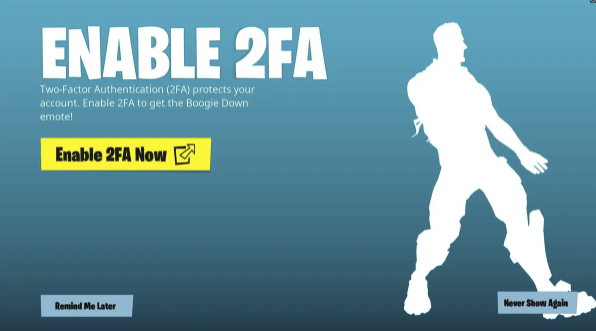

A Solid Example of a Suspicious Attempt to Get You to Opt In to Two-Factor Authentication and Connect Your Phone with Other Devices:

Fortnite 2 Factor Authentication Opt In Scam

Fortnite’s offer of the “Boogie Down” emote to users who enable 2FA is, in my opinion, a notable example of how companies use incentives to enhance security while also gathering valuable user data. By enticing users to enable 2FA with rewards such as in-game items, the company not only enhances account security, as it claims, but can also gain insight into user behavior across multiple devices. Officially, this strategy is supposed to help the company better understand its player base and potentially improve the overall gaming experience. But it can also be used to manipulate users by getting them hooked on DLCs, avatars, extras, and other merchandise, add-ons, and products that the company knows they won’t be able to resist.

Here are ten possible scenarios in which the company behind a worldwide AAA massively multiplayer online game, such as Fortnite, might use aggressive tactics to encourage users to opt in to 2FA and then potentially abuse the data or manipulate consumers:

- Data Harvesting for Advertising: The company may collect data on user behavior across multiple devices, creating detailed profiles to serve highly targeted advertisements, thereby increasing advertising revenue.

- In-Game Purchase Manipulation: By tracking user interactions, the company could manipulate in-game offers and discounts to encourage additional in-game purchases, exploiting users’ preferences and spending habits.

- Content Addiction and Spending: The company might use behavioral insights to design content and events that exploit users’ tendencies, keeping them engaged and spending money on downloadable content (DLCs) and microtransactions.

- Influence on Game Balancing: Data gathered through 2FA could influence game balancing decisions, potentially favoring players who spend more or exhibit specific behaviors, leading to unfair gameplay experiences.

- Pushing Subscription Services: The company may use behavioral data to identify potential subscribers and relentlessly promote subscription services, driving users to sign up for ongoing payments.

- Social Engineering for User Engagement: Leveraging knowledge of players’ habits, the company could employ social engineering techniques to manipulate users into promoting the game to friends, potentially leading to more players and revenue.

- Tailored Product Launches: The company might strategically time and tailor product launches based on user behavior, encouraging purchases at specific intervals, even if users hadn’t planned to buy.

- Personalized Content Restrictions: Behavioral data could be used to selectively restrict content or features for users who don’t meet certain criteria, pushing them to spend more to unlock these features.

- Cross-Promotion and Monetization: The company could collaborate with other businesses to cross-promote products or services to users based on their tracked preferences, generating additional revenue streams.

- Reward Manipulation: The company may adjust the distribution of in-game rewards based on user behavior, encouraging users to spend more time and money on the platform to earn desired items.

Fortnite 2FA Emote Opt In Trick

These scenarios emphasize the potential for companies to use aggressive tactics and 2FA-related data collection to maximize profits, often at the expense of user privacy and by manipulating consumer behavior for financial gain. They underscore the importance of user awareness and informed decision-making when opting in to 2FA and sharing personal data with online gaming platforms. Users should be aware of the data collection practices associated with such incentives and understand how their information may be used, and platforms need transparency and clear communication about data usage to maintain users’ trust. In this context, users should weigh the benefits of enhanced security against the potential data collection and decide whether to enable 2FA based on their own privacy preferences and concerns.

1. Data Profiling and Surveillance

One of the most ominous aspects of 2FA implementation is the potential for data profiling and surveillance. Companies can leverage 2FA as a means to collect extensive user data, including device locations, usage patterns, and behavioral data. This information can be used for targeted advertising and behavioral analysis, and potentially even sold to third parties without user consent. To illustrate, here are 10 possible nefarious scenarios in which 2FA could be exploited for unethical purposes or invasion of privacy:

- Location Tracking: Companies could use 2FA to continuously track the location of users through their devices, building detailed profiles of their movements for intrusive marketing purposes.

- Behavioral Profiling: By analyzing the times and frequency of 2FA logins, companies could build extensive behavioral profiles of users, potentially predicting their actions and preferences.

- Data Correlation: Combining 2FA data with other user information, such as browsing habits and social media interactions, could enable companies to create comprehensive dossiers on individuals, which may be sold or used without consent.

- Phishing Attacks: Malicious actors might exploit 2FA to gain access to users’ personal information, tricking them into revealing their second authentication factor through fake login screens.

- Targeted Ads: Companies could leverage 2FA data to bombard users with highly targeted and invasive advertisements based on their recent activities and location history.

- Surveillance Capitalism: 2FA data could be used to monitor users’ offline activities, creating a complete picture of their lives for profit-driven surveillance capitalism.

- Third-Party Sales: Without proper safeguards, companies might sell 2FA data to third parties, potentially leading to further unauthorized use and misuse of personal information.

- Blackmail: Malicious entities could use 2FA information to threaten individuals with the exposure of sensitive data, extorting money or personal favors.

- Stalking: Stalkers and abusers could exploit 2FA to track and harass their victims, using location and behavioral data to maintain control.

- Government Surveillance: In some cases, governments may pressure or require companies to provide 2FA data, enabling mass surveillance and privacy violations on a massive scale.

These scenarios emphasize the importance of strong data protection laws, ethical use of personal data, and user consent when implementing 2FA systems to mitigate such risks.
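To make the behavioral profiling scenario above more concrete, here is a minimal sketch of how little is needed to start building such a profile. The login log, the device labels, and the `profile_logins` function are all hypothetical and invented for illustration; they do not describe any real company's system.

```python
from collections import Counter
from datetime import datetime

# Hypothetical 2FA login log: (ISO timestamp, device label).
# This is invented sample data, not taken from any real service.
LOGIN_EVENTS = [
    ("2024-03-04T07:58:00", "phone"),
    ("2024-03-04T22:41:00", "console"),
    ("2024-03-05T08:02:00", "phone"),
    ("2024-03-05T22:15:00", "console"),
    ("2024-03-06T08:05:00", "phone"),
    ("2024-03-09T23:30:00", "console"),
]


def profile_logins(events):
    """Summarize when and from which devices an account usually logs in."""
    hours = Counter()
    devices = Counter()
    for stamp, device in events:
        hours[datetime.fromisoformat(stamp).hour] += 1
        devices[device] += 1
    return {
        # The two hours of the day with the most logins.
        "busiest_hours": [hour for hour, _ in hours.most_common(2)],
        # How often each device was used.
        "device_counts": dict(devices),
    }


profile = profile_logins(LOGIN_EVENTS)
print(profile)
```

Even this toy summary hints at a daily routine (a morning phone login, a late-evening console session), which is why login metadata deserves the same protection as any other personal data.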

2. Government Demands for Access

In some cases, governments or malicious actors may exert pressure on companies to gain access to 2FA data for surveillance purposes. This can infringe upon individuals’ privacy rights and result in unauthorized surveillance on a massive scale. To illustrate once more, here are 10 possible nefarious scenarios in which government demands for access to 2FA data could be exploited for unethical purposes or invasion of privacy:

- Political Targeting: Governments may use access to 2FA data to identify and target political dissidents, activists, or opposition members, leading to surveillance, harassment, or even imprisonment.

- Mass Surveillance: Governments could implement widespread 2FA data collection to surveil entire populations, creating a culture of constant monitoring and chilling freedom of expression.

- Suppression of Free Speech: The threat of government access to 2FA data could lead to self-censorship among citizens, inhibiting open discourse and free speech.

- Blackmail and Extortion: Corrupt officials might use 2FA data to gather compromising information on individuals and then use it for blackmail or extortion.

- Journalist and Source Exposure: Investigative journalists and their sources could be exposed, endangering press freedom and the ability to uncover corruption and misconduct.

- Discrimination and Profiling: Governments could use 2FA data to discriminate against certain groups based on their religious beliefs, ethnicity, or political affiliations.

- Political Leverage: Access to 2FA data could be used to gain leverage over individuals in positions of power, forcing them to comply with government demands or risk exposure.

- Invasive Border Control: Governments might use 2FA data to track individuals’ movements across borders, leading to unwarranted scrutiny and profiling at immigration checkpoints.

- Health and Personal Data Misuse: Government access to 2FA data could lead to unauthorized collection and misuse of individuals’ health and personal information, violating medical privacy.

- Illegal Detention: Misuse of 2FA data could result in wrongful arrests and detentions based on false or fabricated evidence, eroding the principles of justice and due process.

Governments may make demands for access to various types of data and information for a variety of reasons, often within the framework of legal processes and national security concerns. Here’s an explanation of how and why governments may make demands for access:

- Legal Frameworks: Governments establish legal frameworks and regulations that grant them the authority to access certain types of data. These laws often pertain to national security, law enforcement, taxation, and other public interests. Examples include the USA PATRIOT Act in the United States and similar legislation in other countries.

- Law Enforcement Investigations: Government agencies, such as the police or federal law enforcement agencies, may request access to data as part of criminal investigations. This can include access to financial records, communication logs, or digital evidence related to a case.

- National Security Concerns: Governments have a responsibility to protect national security, and they may seek access to data to identify and mitigate potential threats from foreign or domestic sources. Access to communication and surveillance data is often critical for these purposes.

- Taxation and Financial Oversight: Government tax authorities may demand access to financial records, including bank account information and transaction history, to ensure compliance with tax laws and regulations.

- Public Safety and Emergency Response: In emergency situations, such as natural disasters or public health crises, governments may access data to coordinate response efforts, locate missing persons, or maintain public safety.

- Counterterrorism Efforts: Governments may seek access to data to prevent and investigate acts of terrorism. This includes monitoring communication channels and financial transactions associated with terrorist organizations.

- Regulatory Compliance: Certain industries, such as healthcare and finance, are heavily regulated. Governments may demand access to data to ensure compliance with industry-specific regulations, protect consumer rights, and prevent fraudulent activities.

- Protection of Intellectual Property: Governments may intervene in cases of intellectual property theft, counterfeiting, or copyright infringement, demanding access to data to support legal actions against violators.

- Surveillance Programs: Some governments conduct surveillance programs to monitor digital communications on a large scale for national security reasons. These programs often involve partnerships with technology companies or data service providers.

- Access to Social Media and Online Platforms: Governments may request data from social media platforms and online service providers for various purposes, including criminal investigations, monitoring extremist content, or preventing the spread of misinformation.

It’s important to note that the extent and nature of government demands for access to data vary from one country to another and are subject to local laws and regulations. Moreover, the balance between national security and individual privacy is a contentious issue, and debates often arise around the scope and limits of government access to personal data. Consequently, governments must strike a balance between legitimate security concerns and the protection of individual rights and privacy.

These scenarios highlight the critical need for strong legal protections, oversight mechanisms, and transparency regarding government access to sensitive data like 2FA information to safeguard individual rights and privacy.

3. Exploiting Data Breaches

Data breaches are an unfortunate reality in today’s digital age. Even with the best intentions, companies can experience breaches that expose user information, including 2FA data. Malicious individuals may exploit these breaches for identity theft, fraud, or other illegal activities. To make the risks understandable, here are 10 possible nefarious scenarios where data breaches, including the exposure of 2FA data, could be exploited for unethical purposes, criminal activities, or invasion of privacy:

- Identity Theft: Malicious actors could use stolen 2FA data to impersonate individuals, gain unauthorized access to their accounts, and commit identity theft for financial or personal gain.

- Financial Fraud: Access to 2FA data may allow criminals to initiate fraudulent financial transactions, such as draining bank accounts, applying for loans, or making unauthorized purchases.

- Account Takeover: Hackers could compromise various online accounts by bypassing 2FA, potentially gaining control over email, social media, or even cryptocurrency wallets.

- Extortion: Criminals might threaten to expose sensitive information obtained from data breaches unless victims pay a ransom, leading to extortion and emotional distress.

- Stalking and Harassment: Stolen 2FA data could be used to track and harass individuals, invading their personal lives and causing significant emotional harm.

- Illegal Brokerage of Data: Criminal networks could sell stolen 2FA data on the dark web, leading to further exploitation and unauthorized access to personal information.

- Healthcare Fraud: 2FA breaches in healthcare systems could result in fraudulent medical claims, endangering patient health and privacy.

- Corporate Espionage: Competing businesses or nation-states could exploit 2FA breaches to gain sensitive corporate information, such as trade secrets or research data.

- Social Engineering: Criminals might use stolen 2FA data to manipulate victims, convincing them to disclose additional sensitive information or perform actions against their will.

- Reputation Damage: The release of personal information from data breaches, including 2FA details, could tarnish an individual’s reputation and lead to long-lasting consequences in both personal and professional life.

These scenarios underscore the critical importance of robust cybersecurity measures, rapid breach detection and response, and user education on safe online practices to mitigate the risks associated with data breaches and protect individuals’ privacy and security.
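As one small illustration of what a robust measure can look like, the sketch below stores 2FA recovery codes only as salted hashes, so that a leaked database does not directly reveal usable codes. It is a minimal sketch under stated assumptions, not a complete design: the function names, the record layout, and the `ITERATIONS` work factor are all hypothetical choices made for this example.

```python
import hashlib
import hmac
import secrets

# Assumed work factor for PBKDF2; a real deployment would tune this.
ITERATIONS = 200_000


def hash_code(code: str, salt: bytes) -> bytes:
    """Derive a slow, salted digest from a recovery code."""
    return hashlib.pbkdf2_hmac("sha256", code.encode(), salt, ITERATIONS)


def new_recovery_code():
    """Return (code to show the user once, record that is safe to store)."""
    code = secrets.token_hex(8)      # 16 random hex characters
    salt = secrets.token_bytes(16)   # unique salt per code
    return code, {"salt": salt, "digest": hash_code(code, salt)}


def verify(code: str, record: dict) -> bool:
    """Check a submitted code against the stored record in constant time."""
    return hmac.compare_digest(hash_code(code, record["salt"]), record["digest"])


code, record = new_recovery_code()
print(verify(code, record))          # the genuine code is accepted
print(verify("not-the-code", record))  # any other value is rejected
```

The stored record contains only the salt and the digest, so an attacker who steals it still has to guess the code itself.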

4. Phishing Attacks

Cybercriminals can manipulate 2FA processes as part of phishing attacks. By posing as legitimate entities, attackers may request 2FA codes to gain unauthorized access to user accounts, exposing sensitive information to malicious intent. To demonstrate, here are 10 possible nefarious scenarios in which phishing attacks, including the manipulation of 2FA processes, could be used for various goals:

- Corporate Espionage: Phishers could target employees of a competitor, posing as colleagues or executives, to extract sensitive corporate information, trade secrets, or proprietary data.

- Identity Theft: Attackers might impersonate a user’s bank, government agency, or social media platform to steal personal information, such as Social Security numbers or login credentials, for identity theft.

- Financial Fraud: Phishers could send fake 2FA requests while posing as financial institutions, tricking victims into revealing their codes and gaining access to bank accounts or investment portfolios.

- Political Disinformation: In politically motivated phishing campaigns, attackers may pose as news organizations or government agencies to spread false information, manipulate public opinion, or influence elections.

- Ransomware Deployment: Phishers could deliver ransomware payloads after convincing victims to input their 2FA codes, locking them out of their systems and demanding payment for decryption.

- Data Breach Access: Malicious actors might use phishing to gain access to employees’ email accounts within an organization, which could lead to a data breach or the theft of sensitive company data.

- Fraudulent Transactions: Attackers posing as e-commerce websites or payment processors could trick users into approving unauthorized transactions using manipulated 2FA prompts.

- Credential Harvesting: Phishers could target university or corporate email accounts to harvest login credentials, gaining access to academic research, intellectual property, or confidential documents.

- Social Media Takeover: By sending fake 2FA requests from popular social media platforms, attackers could gain control of users’ accounts, spreading false information or conducting cyberbullying campaigns.

- Government Infiltration: Nation-state actors might use phishing attacks to compromise government employees’ accounts, potentially gaining access to classified information or influencing diplomatic relations.

These examples highlight the importance of user education, email filtering, and multi-layered security measures to detect and prevent phishing attacks that exploit 2FA processes for various malicious purposes.

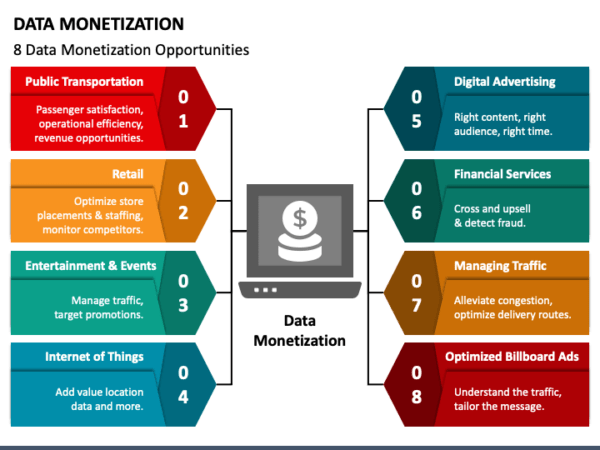

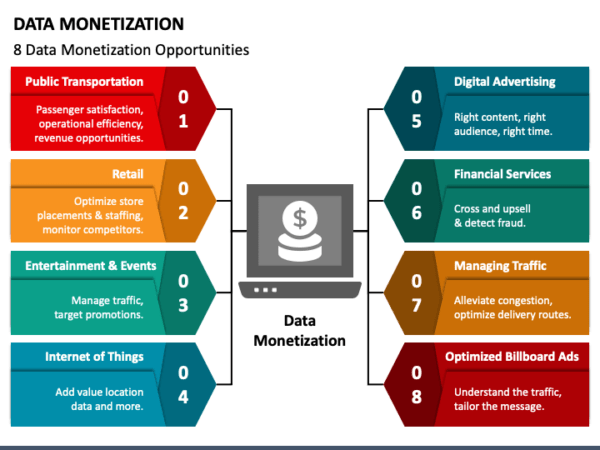

Visual mind map of the architecture of data monetization

5. Monetization of User Data

Some companies may prioritize data monetization over user privacy. By pushing for 2FA, these entities gather more valuable user information that can be monetized through various channels, without users fully understanding the extent of data collection. To help the reader understand this, here are 10 possible nefarious scenarios that illustrate the extent and depth to which personal information can be brokered in the user-data brokerage industry:

- Detailed Financial Profiles: Data brokers compile extensive financial profiles of individuals, including income, spending habits, investment preferences, and debt levels. This information can be sold to financial institutions for targeted marketing and credit assessments.

- Behavioral Predictions: By analyzing user behavior, data brokers create predictive models that forecast individuals’ future actions, such as purchasing decisions, travel plans, or lifestyle changes. This data is valuable for advertisers and marketers.

- Healthcare Histories: Data brokers may obtain and sell sensitive health information, including medical conditions, prescription histories, and insurance claims, potentially leading to discriminatory practices in insurance or employment.

- Legal Records: Personal legal records, such as criminal histories, lawsuits, and court judgments, can be collected and sold, affecting an individual’s reputation and opportunities.

- Political Affiliations: Data brokers gather data on users’ political beliefs, affiliations, and voting histories, which can be exploited for political campaigns or voter suppression efforts.

- Psychological Profiles: User data is used to create psychological profiles, revealing personality traits, emotional states, and vulnerabilities, which can be leveraged for targeted persuasion or manipulation.

- Relationship Status and History: Personal information about relationships, including marital status, dating history, and family dynamics, can be exploited for advertising, relationship counseling, or even blackmail.

- Job Performance: Data brokers collect employment records, performance evaluations, and work history, which can impact career opportunities and job offers.

- Travel and Location History: Brokers track users’ travel history, including destinations, frequency, and preferences, which can be used for targeted travel-related advertising or even surveillance.

- Education and Academic Records: Academic records, degrees earned, and educational achievements are collected and sold, potentially affecting job prospects and educational opportunities.

These scenarios underscore the ethical concerns surrounding the extensive data collection and monetization practices of data brokers and the need for robust data protection regulations and transparency to safeguard individual privacy and prevent abuse.

6. Intrusive Tracking and Profiling

2FA can enable companies to build detailed profiles of users, including their habits, preferences, and locations. This intrusive tracking and profiling can be used to manipulate user behavior and extract further data, all without transparent consent. So heads up, and educate yourselves! To assist you with this, here are ten examples of how companies, advertisers, governments, or independent parties with special interests might use or abuse intrusive tracking and profiling technologies to manipulate human behavior for specific desired results:

- Targeted Advertising: Companies can use detailed user profiles to deliver highly personalized advertisements that exploit individuals’ preferences, making them more likely to make impulse purchases.

- Political Manipulation: Governments or political campaigns may leverage profiling to identify and target voters with tailored messages, swaying public opinion or voter behavior.

- Behavioral Addiction: App and game developers might use user profiles to design addictive experiences that keep individuals engaged and coming back for more, generating ad revenue or in-app purchases.

- Surveillance and Social Control: Governments can employ profiling to monitor citizens’ activities, stifling dissent or controlling behavior through the fear of being watched.

- Credit Scoring and Discrimination: Financial institutions may use profiling to assess creditworthiness, potentially discriminating against individuals based on factors like shopping habits or online activities.

- Healthcare Manipulation: Health insurers could adjust premiums or deny coverage based on profiling data, discouraging individuals from seeking necessary medical care.

- Manipulative Content: Content providers may use profiles to serve content designed to provoke emotional responses, encouraging users to spend more time online or share content with others.

- Employment Discrimination: Employers might make hiring decisions or promotions based on profiling data, leading to unfair employment practices.

- Criminal Investigations: Law enforcement agencies can use profiling to target individuals for investigation, potentially leading to wrongful arrests or harassment of innocent people.

- Reputation and Social Standing: Profiling data can be used to tarnish an individual’s reputation, either through targeted character assassination or by uncovering potentially embarrassing personal information.

These examples highlight the ethical concerns associated with intrusive tracking and profiling technologies and the potential for manipulation and abuse by various entities. They underscore the importance of strong data protection laws, transparency, and user consent in mitigating such risks and protecting individual privacy and autonomy.

7. Phone Number Compromise and Security Risks

When a network or service requires a phone number for two-factor authentication (2FA) and their database is compromised through a data breach, it can lead to the exposure of users’ phone numbers. This scenario opens users up to various security risks, including:

- Phishing Attacks: Hackers can use exposed phone numbers to craft convincing phishing messages, attempting to trick users into revealing sensitive information or login credentials.

- Unwanted Advertising: Once hackers have access to phone numbers, they may use them for spam messages and unwanted advertising, inundating users with unsolicited content.

- Scam Phone Calls: Phone numbers exposed through a data breach can be targeted for scam phone calls, where malicious actors attempt to deceive users into providing personal or financial information.

- SIM Swapping: Hackers can attempt to perform SIM swapping attacks, where they convince a mobile carrier to transfer the victim’s phone number to a new SIM card under their control. This allows them to intercept 2FA codes and gain unauthorized access to accounts.

- Identity Theft: Exposed phone numbers can be used as a starting point for identity theft, with attackers attempting to gather additional personal information about the user to commit fraud or apply for loans or credit cards in their name.

- Harassment and Stalking: Malicious individuals may use the exposed phone numbers for harassment, stalking, or other forms of digital abuse, potentially causing emotional distress and safety concerns for victims.

- Social Engineering: Attackers armed with users’ phone numbers can engage in social engineering attacks, convincing customer support representatives to grant access to accounts or change account details.

- Voice Phishing (Vishing): Exposed phone numbers can be used for voice phishing, where attackers impersonate legitimate organizations or authorities over phone calls, attempting to manipulate victims into revealing sensitive information.

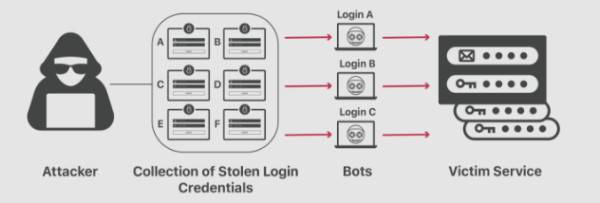

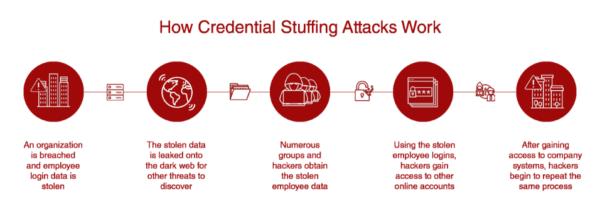

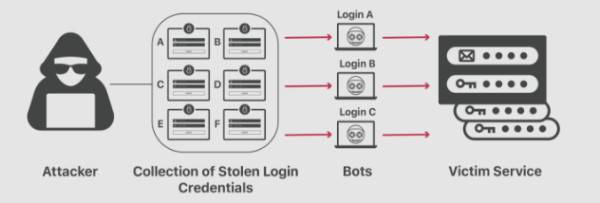

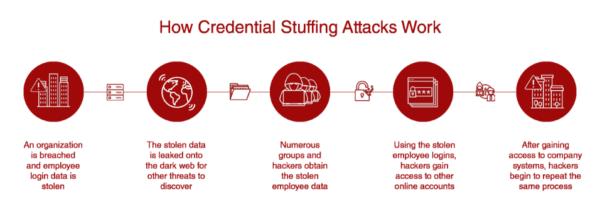

- Credential Stuffing: Attackers may attempt to use the exposed phone numbers in combination with other stolen or leaked credentials to gain unauthorized access to various online accounts, exploiting reused passwords.

- Data Aggregation: Exposed phone numbers can be aggregated with other breached data, creating comprehensive profiles of individuals that can be used for further exploitation, fraud, or identity-related crimes.

How Credential Stuffing is Done

These security risks highlight the importance of robust security practices, such as regularly updating passwords, monitoring accounts for suspicious activity, and being cautious of unsolicited messages and calls, to mitigate the potential consequences of phone number exposure in data breaches. Requiring a phone number for 2FA should itself be considered a possible security vulnerability. I believe this underscores the importance of securing both personal information and phone numbers, as the compromise of this data can have far-reaching consequences beyond the immediate breach. It also emphasizes the need for alternative methods of 2FA that don’t rely solely on phone numbers to enhance security while protecting user privacy.
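One widely used alternative that needs no phone number is the time-based one-time password (TOTP) defined in RFC 6238, which is what most authenticator apps implement. The sketch below shows the core calculation using only the Python standard library. It is meant as an illustration of the idea rather than a production implementation; the function names are my own, and the secret is the published RFC test value, which should never be used for a real account.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) for a given counter."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


# Published RFC test secret, used here only to check the calculation.
secret = b"12345678901234567890"
print(totp(secret, at=59, digits=8))  # RFC 6238 test vector: 94287082
print(totp(secret))                   # current 6-digit code for this secret
```

Because the code is derived from a shared secret and the current time, the service never needs to know the user’s phone number, and there is no SMS message for a SIM-swapping attacker to intercept. TOTP codes can still be phished, so this reduces the risks described above rather than eliminating them.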

In Summary

While two-factor authentication is often portrayed as a security measure aimed at safeguarding user accounts, it is crucial to recognize the potential for misuse and unethical practices. The dark scenarios presented here underscore the need for users to be vigilant about their online privacy, understand the implications of enabling 2FA, and make informed decisions about how their data is used and protected in the digital realm. As technology continues to evolve, the battle between privacy and security remains a central concern, and it is essential for users to stay informed and proactive in safeguarding their personal information.
